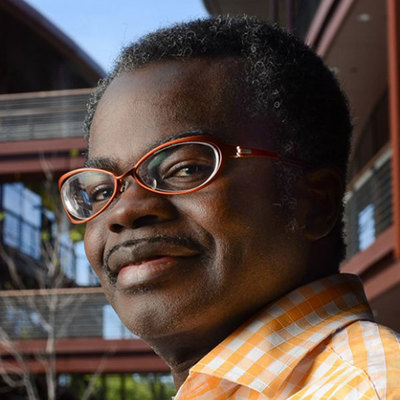

Kwabena Boahen

Stanford University

Talk Title: 3D Silicon Brains

AI’s commercial success in the last decade is the culmination of a shift made half a century ago, from developing newfangled transistors to miniaturizing integrated circuits in 2D. With billions of richly interacting, mathematically abstracted neurons, deep nets benefited enormously from this paradigm shift. But communicating a neuron’s output now uses 1,000× the energy it took to compute it, diminishing the returns of miniaturization and spurring a paradigm shift from shrinking transistors and wires in 2D to stacking them in 3D. While 3D’s compactness minimizes data movement, surface area drops drastically, severely constraining heat dissipation. Starting from first principles, I will show how this thermal constraint may be satisfied by reifying the neocortex’s unary coding and order sensing.

While quantum computers reign supreme in scaling computation by using quantum bits and entanglement with superposition, the cortex reigns supreme in scaling communication by integrating unary (as opposed to deep nets’ binary) signals in dendrites sensitive to temporal order (as opposed to sum-and-threshold). Realizing the cortex’s supreme scaling will facilitate a nascent 2D-to-3D paradigm shift and make it feasible to deploy GPT-3 (an AI that can intelligently answer questions based on knowledge assimilated from scouring vast troves of information on the internet) on-device. This new field of temporal computing will disrupt binary computing and substantially accelerate IT’s progress for decades.

Bio

Kwabena Boahen (M’89, SM’13, F’16) received the B.S. and M.S.E. degrees in electrical and computer engineering from the Johns Hopkins University, Baltimore, MD, both in 1989, and the Ph.D. degree in computation and neural systems from the California Institute of Technology, Pasadena, in 1997. He was on the bioengineering faculty of the University of Pennsylvania from 1997 to 2005, where he held the first Skirkanich Term Junior Chair. He is presently Professor of Bioengineering at Stanford University, with a joint appointment in Electrical Engineering. He founded Stanford’s Brains in Silicon lab, which develops silicon integrated circuits that emulate the way neurons compute and computational models that link biophysical neuronal mechanisms to cognitive behavior. His research is interdisciplinary, bringing together the seemingly disparate fields of neurobiology and medicine with electronics and computer science. His scholarship is widely recognized, with over ninety publications to his name. These include a cover story in Scientific American (May 2005) featuring his group’s work on a silicon retina and a silicon tectum that correctly “wire together” automatically. He has been invited to give over 70 seminar, plenary, and keynote talks. These include a 2007 TED talk, “A computer that works like the brain”, that has been viewed half a million times. He has received several distinguished honors, including a Packard Fellowship for Science and Engineering (1999) and a National Institutes of Health Director’s Pioneer Award (2006). He was elected a fellow of the American Institute for Medical and Biological Engineering (2016) and of the Institute of Electrical and Electronics Engineers (2016) in recognition of his group’s work on Neurogrid (2006-12), an iPad-size platform that emulates the cortex in biophysical detail and at functional scale. As this combination hitherto required a supercomputer, Neurogrid revived interest in neuromorphic computing. Students from his group went on to lead the design of IBM’s TrueNorth chip. In his most recent research effort, the Brainstorm Project, he led a multi-university, multi-investigator team to co-design hardware and software that make neuromorphic computing much easier to apply. A spin-out from his Stanford group, Femtosense Inc (2018), is commercializing this breakthrough.